There’s a certain kind of cultural panic that tells you more about the panickers than about the thing they are panicking about. The current hysteria over AI-inflected prose is a good example.

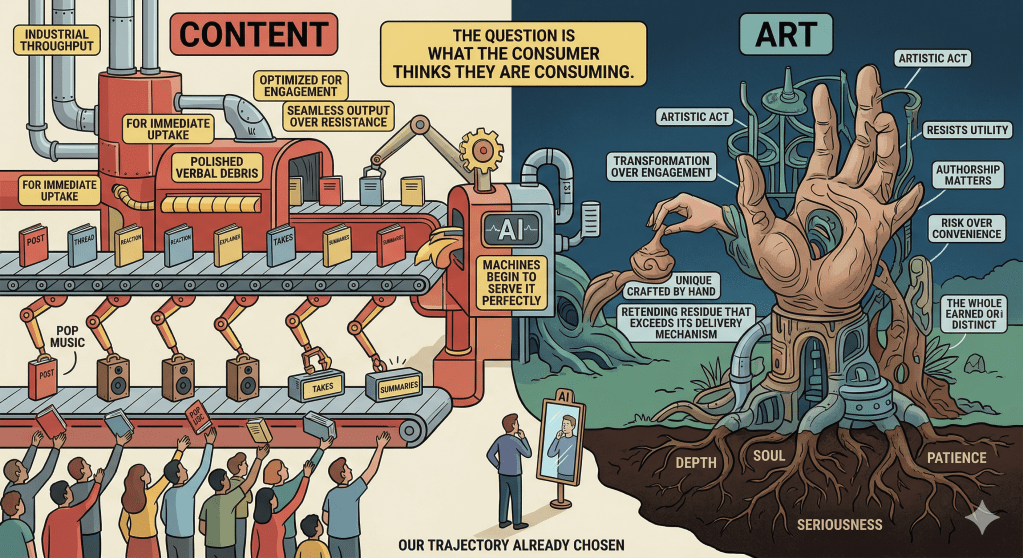

The argument, insofar as it deserves the name, goes roughly like this: LLMs produce prose with identifiable features – a certain blandness, a fondness for the em dash, a tendency toward tidy three-part structure. Writers who use these tools risk absorbing those features. The authentic human voice is therefore under threat. Something precious is being diluted by contact with the machine.

This is sentimental rubbish and a sort of virtue signalling, and it is worth saying so clearly before doing anything else.

I use LLMs daily. For research, for editorial pushback, for smoothing passages that have gone awry. This means I spend hours a day reading a particular kind of output. You’d have to be delusional not to admit it has effects. Certain phrasings start feeling natural that didn’t before. Certain rhythms begin to recur. Certain words surface that might not otherwise have come into use. I notice this without particular alarm, because I’ve read enough to know that this is just what environments do.

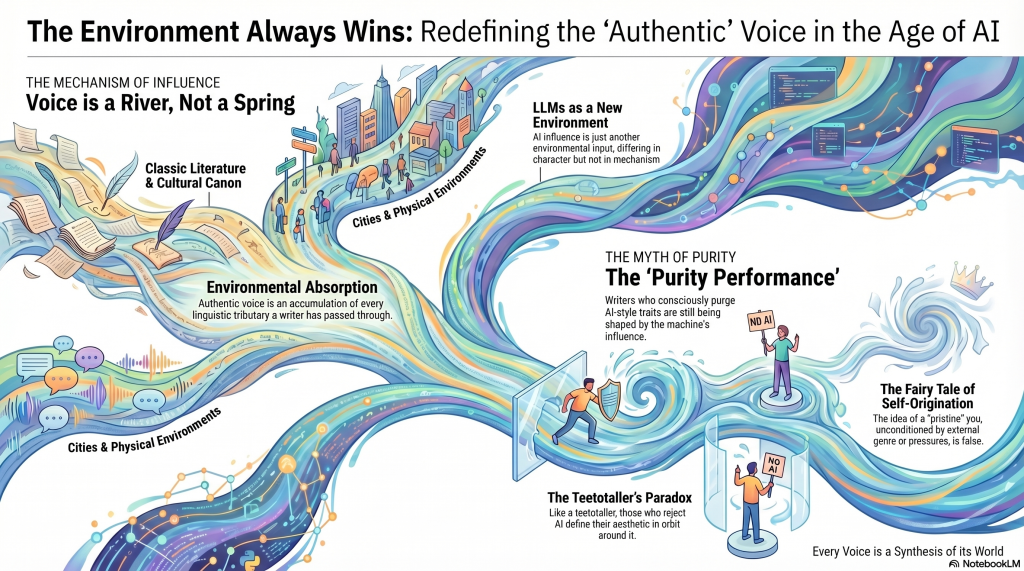

Read nothing but McCarthy for a month, and your sentences will start hunting for the spare declarative. Spend a year editing academic philosophy, and you will catch yourself reaching for ‘insofar as’ and ‘it’s worth noting’ in casual conversation. Live in a city long enough, and its cadences work their way into your syntax. This isn’t contamination, to use the moralists’ aspersion. It’s how language acquisition works for as long as one is alive and reading. Voice isn’t a spring. It’s a river, a moving accumulation of every tributary it has passed through.

The prestige game being played by the anti-LLM faction isn’t difficult to spot. When Dostoyevsky shapes a young writer’s cadence, we call it influence and treat it as evidence of a serious literary education. When a game world shapes a child’s imagination – I homeschooled my son along unschooling lines, and his primary corpus for years was World of Warcraft and its attendant lore before shifting to Dark Souls – and that child ends up reading Dante and Milton unprompted in year seven, the same mechanism has clearly operated. The source was not canonical; the outcome was. But the respectable hierarchy of influences cannot easily accommodate this, because the hierarchy was never really about the mechanism. It was about the cultural status of the inputs.

The more interesting observation isn’t about those of us who use these tools. It’s about those who conspicuously do not.

A minor genre has emerged – charitably, I’ll call it a genre, because ‘cult’ feels morally loaded – consisting of writers anxiously purging their prose of anything that might read as AI-generated. It’s worth noting that they have read the lists. Telltale signs of LLM authorship: excessive hedging, em dashes, transitional summaries, the phrase ‘it is worth noting’. And so they scrub, redact, and replace, performing a kind of stylistic hygiene that is itself a creative decision made in direct response to LLM discourse.

These writers aren’t free of the machine’s influence. They’re among the most thoroughly shaped by it. They simply have the more theatrical relationship – the counter-imitator, the purity-performer, the one who reorganises their entire aesthetic in orbit around the thing they claim to reject.

Thomas Moore, in *Care of the Soul*, observes that a child raised by an alcoholic parent tends to become either an alcoholic or a committed teetotaller. He presents this as a dichotomy, which is too neat, but the underlying point holds. Reactions are still relata – see what happens when you read too much philosophy and logic? The teetotaller has organised their life around the bottle as surely as the alcoholic has. Both are defined by it.

Opposition is one of influence’s favourite disguises.

The fair objection is that LLM influence may differ from other influences in kind rather than just in degree. Dostoyevsky is strange. Bernhard is strange to the point of pathology. A canonical prose style is idiosyncratic by definition, which is why it’s worth absorbing. In contrast, LLM output aims for plausible fluency and statistical centrality. Its pull may be more homogenising than the pull of a singular authorial sensibility.

That’s a real point. The environment in question has a centripetal force toward the mean that most literary influences lack.

But conceding the point doesn’t really rescue the panic. It just specifies the kind of influence involved. The mechanism remains identical to every other case of environmental absorption. And ‘this influence tends toward the generic’ is, ironically, a generic critique of one environment’s character, not a claim that the environment is doing something ontologically unprecedented to the notion of authorship.

The question that actually matters aesthetically is not *was this touched by AI?* It is *what did the writer do with the environment they inhabited?* That’s always been the question. It remains the question. The machinery has changed; the problem of influence has not.

What the current schism actually reveals is not that AI is doing something new to writing. It’s that we’ve been operating with a fairy tale about what writing is. The fairy tale holds that voice is self-originating, that somewhere beneath the reading AND the editing AND the genre conventions AND the institutional pressures AND the decade of a particular editor’s feedback, there is a pristine you, unconditioned and pure, expressing itself directly onto the page.

This was always false. Writers have always been patchworks of absorbed environments. The only difference now is that one of the environments is a machine, and the machine is new enough that people haven’t yet learned to be comfortable with what it reveals about the rest.

The environment always wins. The only interesting question is which environments you choose, and what you make of them.