The LinkedIn consensus has spoken: if you used AI in the writing process, you are not the author. The position is stated with the confidence of someone who has never hired a ghostwriter, employed a research assistant, submitted to a heavy editor, or considered that Washington’s Farewell Address was drafted largely by Alexander Hamilton.

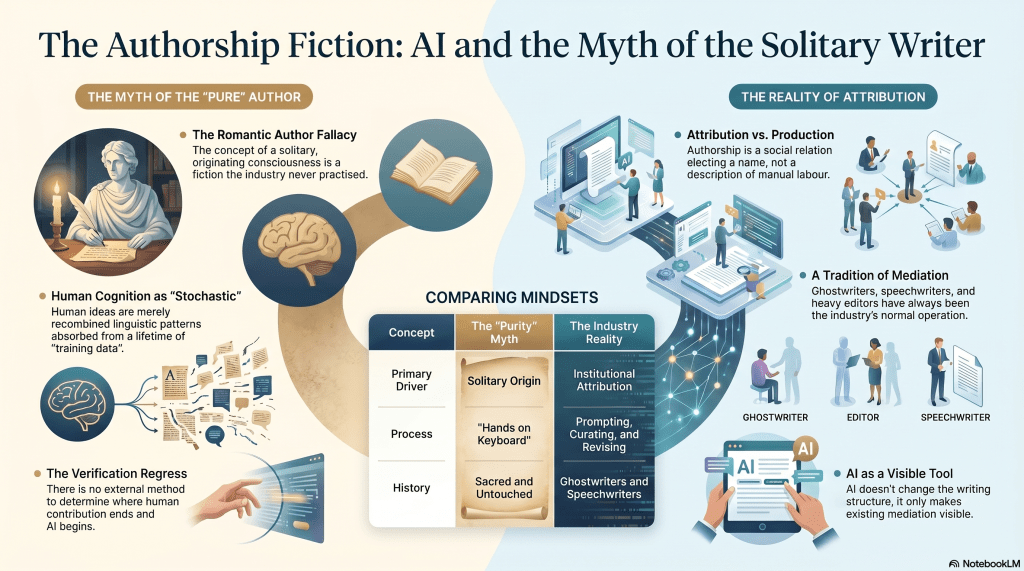

Authorship has never been a production relation. It has always been an attribution relation — an institutionally stabilised answer to the question of which name the practice elects to put on the cover. These are not the same thing, and conflating them is the error from which every subsequent confusion proceeds.

The ghostwriter has existed as long as commercial publishing. The political speechwriter is so normalised that nobody considers it worth remarking. The celebrity memoir, the corporate thought-leadership piece, the attributed editorial — these are not edge cases or embarrassing exceptions. They are the normal operation of every writing-adjacent industry that has ever existed. The name on the cover has never reliably indicated the hands on the keyboard, and the industry has never seriously pretended otherwise. It has simply preferred not to discuss it at dinner.

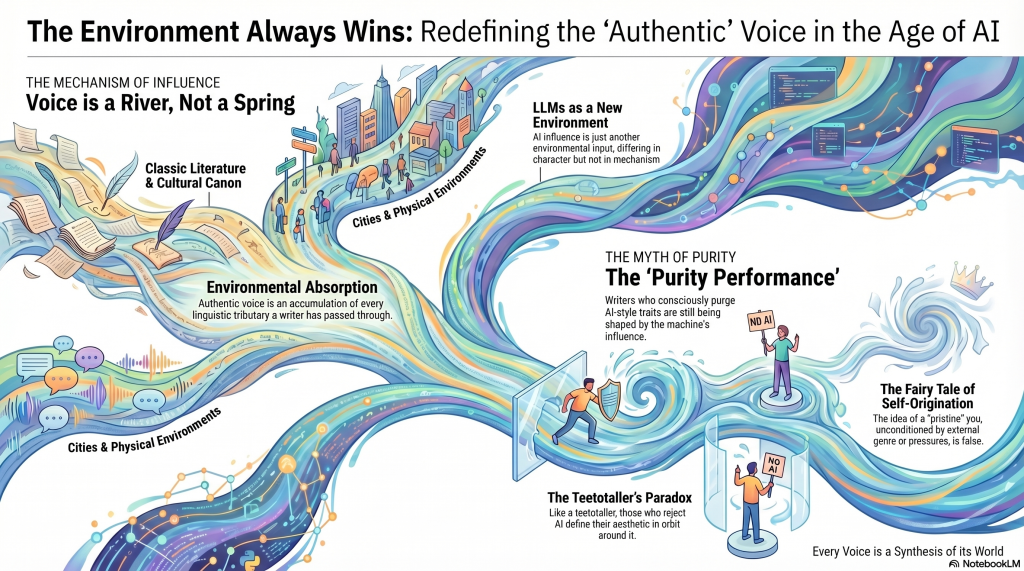

AI changes the tool. It does not change the structure. The person who prompts, selects, curates, revises, and publishes is doing what commissioners of ghostwriters have always done. What has changed is that AI makes the mediation visible in a way that polite convention previously concealed. Visibility triggers the purity reflex. What presents itself as a defence of authentic authorship is a defence of a particular fiction — the Romantic author as solitary originating consciousness — that the industry never consistently believed and certainly never consistently practised.

The purity position also fails on its own terms before it gets started. Consider the spectrum of AI-assisted writing: a full draft submitted for light polish; a human argument substantially revised by AI; collaborative ideation followed by AI drafting; a kernel of an idea handed over for full execution. These are genuinely different in terms of human contribution. The zealot position requires a threshold somewhere on this spectrum below which authorship lapses. It never specifies where. More fatally, it has no means of verification. There is no external method of determining where on the spectrum any given piece of writing falls. The detector tools produce probabilistic noise and disproportionately penalise competent prose. Any audit mechanism sophisticated enough to catch first-order evasion immediately generates a second-order workaround. The regress terminates only at continuous surveillance of the writing process: panoptical authorship as the logical endpoint of the position taken seriously.

Then there is the recursion problem, which the zealot never addresses because it is fatal. The stochastic parrot charge against AI — that it merely recombines absorbed linguistic patterns without genuine origination — describes with considerable accuracy what human cognition also does. The writer’s training data is the Dickens read at ten, the billboard absorbed on a commute, the argument overheard on public transit, the half-remembered essay that shaped a position without ever being consciously cited. The causal chain of any human idea disappears into an unauditable cognitive history. Genuine origination in the sense the purity position requires has never existed. The Romantic author was always a retrospective confabulation. Barthes said so in 1967. The industry nodded politely and continued invoicing.

What the zealot is defending is not authorship. It is a particular grammar of authorship — one that selects compositional origin as the threshold criterion, applies it selectively and unverifiably, and uses the resulting suspicion as a status boundary. It is guild behaviour dressed as principle, which is understandable as a response to a genuine economic threat but should not be mistaken for a philosophical position.

Authorship is the position a culture elects to stabilise after the work has already been produced through far messier means. It has always been thus. AI did not break the fiction. It just made the fiction harder to keep a straight face about.

The Rest of the Story

I’ve written about this before. I am not an AI apologist, but I am peeved by anti-LLM zealots, who clearly haven’t thought through their arguments.

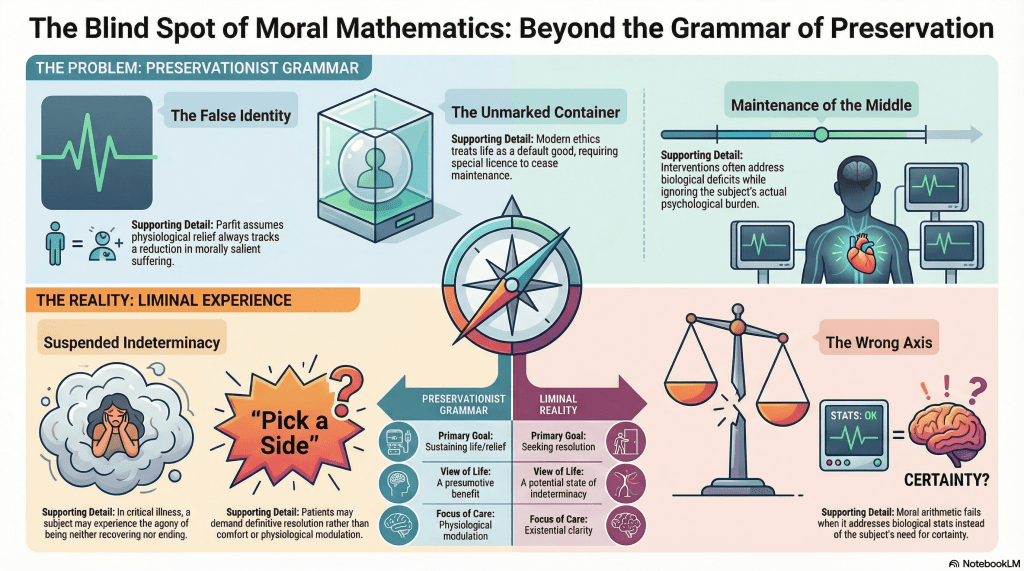

I finished reading the part of A.J. Ayer’s Language, Truth and Logic about Bertrand Russell’s claim about ‘The author of Waverley was Scotch’. My brain latched onto authorship, and my emotional response was WTF? I have other problems with Russell and Ayer on this, but that’s a matter for another day.

To make my point, this page up to the ellipsis is the output of Claude, produced after an extended dialogue with it and ChatGPT that began when I read Ayer and something didn’t sit quite right. I am not ashamed to use LLMs in my authoring workflow and am not ashamed to mention it, as here. Almost all of these thoughts are mine. I’ve simply asked Claude to organise the output. It’s good enough to output as-is, and any edits would be trivial, so I won’t bother. I probably could have made the edits in as much time as it took to type this, but I’ve got nothing to hide. I’m just a human with access to technology circa 2026.