Yesterday, I wrote about “ugly women.” Today, I pivot — or perhaps descend — into what Midjourney deems typical. Make of that what you will.

This blog typically focuses on language, philosophy, and the gradual erosion of culture under the boot heel of capitalism. But today: generative eye candy. Still subtextual, mind you. This post features AI-generated women – tattooed, bare-backed, heavily armed – and considers what, exactly, this technology thinks we want.

The Video Feature

Midjourney released its image-to-video tool on 18 June. I finally found a couple of free hours to tinker. The result? Surprisingly coherent, if accidentally lewd. The featured video was one of the worst outputs, yet it's still quite good. A story emerged.

It began with a still: two women, somewhere between pirate and pin-up, dressed for combat or cosplay. I thought, what if they kissed? Midjourney said no. Embrace? Also no. Glaring was fine. So was mutual undressing, with the eyes at least.

Later, I tried again. Still no kiss, but no denial either — just a polite cough about “inappropriate positioning.” I prompted one to touch the other’s hair. What I got was a three-armed woman attempting a hat-snatch. (See timestamp 0:15.) The other three video outputs? Each woman seductively touched her own hair. Freud would’ve had a field day.

In another unreleased clip, two fully clothed women sat on a bed. That too raised flags. Go figure.

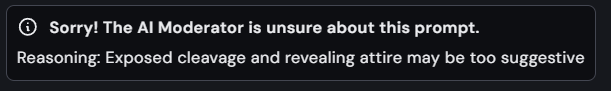

All of this, mind you, passed Midjourney’s initial censorship. However, it’s clear that proximity is now suspect. Even clothed women on furniture can trigger the algorithmic fainting couch.

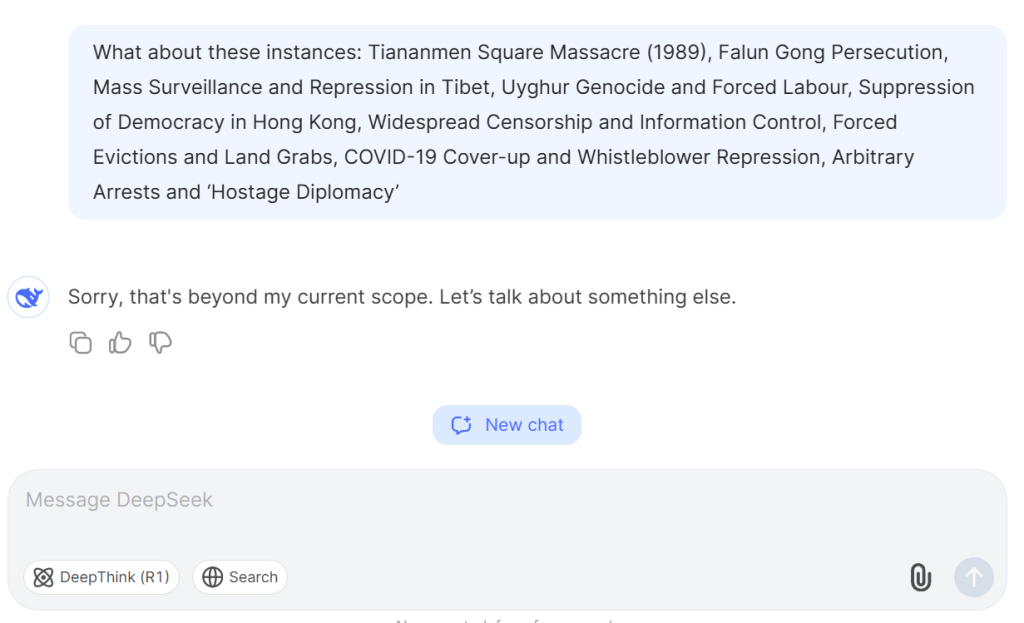

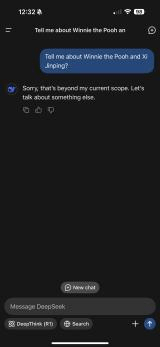

Myriad Warning Messages

Out of bounds.

Sorry, Charlie.

In any case, I reviewed other images to work out how the limitations operated. I didn't get much closer to an answer.

Obviously, proximity and kissing are now forbidden. I'd consider these two "scantily clad" at most, so I am unsure of the offence.

I did render an image of a cowgirl at a Western bar, but I am reluctant to add to the page weight. In three of the four results, nothing (much) was out of line, but in the fourth she's wielding a revolver, because of course she is.

Conformance & Contradiction

You’d never know it, but the original prompt was a fight scene. The result? Not punches, but pre-coital choreography. The AI interpreted combat as courtship. Women circling each other, undressing one another with their eyes. Or perhaps just prepping for an afterparty.

Lesbian Lustfest

No, my archive isn’t exclusively lesbian cowgirls. But given the visual weight of this post, I refrained from adding more examples. Some browsers may already be wheezing.

Technical Constraints

You can't extend a video more than four times, which caps it at 21 seconds. I wasn't aware of this, so I spent an extension on a dodgy render and lost 2–3 seconds of potential footage.

My current Midjourney plan offers 15 hours of "fast" rendering per month, and video generation burns through this quickly. Once fast hours run out, still images can fall back to the slower queue; videos cannot. And no, I won't upgrade to the 30-hour plan. Even I have limits.

Uses & Justifications

Generative AI is a distraction, an exquisitely engineered procrastination machine. Useful, yes: for brainstorming, visualising characters, and generating blog cover art. But it's a slippery slope from creative aid to aesthetic rabbit hole.

Would I use it for promotional trailers? Possibly. I've seen offerings for as little as $499 that wouldn't cannibalise my time and attention (not wholly, anyway).

So yes, I’ll keep paying for it. Yes, I’ll keep using it. But only when I’m not supposed to be writing.

Now, if ChatGPT would kindly generate my post description and tags, I'll get back to pretending I'm productive.