Welcome to part 7, the last of a week-long series on the evolution and limits of language!

This article is part of a seven-day exploration into the fascinating and often flawed history of language—from its primitive roots to its tangled web of abstraction, miscommunication, and modern chaos. Each day, we uncover new layers of how language shapes (and fails to shape) our understanding of the world.

If you haven’t yet, be sure to check out the other posts in this series for a full deep dive into why words are both our greatest tool and our biggest obstacle. Follow the journey from ‘flamey thing hot’ to the whirlwind of social media and beyond!

The Present Day: Social Media and Memes – The Final Nail in the Coffin?

Just when you thought things couldn’t get any more chaotic, enter the 21st century, where language has been boiled down to 280 characters, emojis, and viral memes. If you think trying to pin down the meaning of “freedom” was hard before, try doing it in a tweet—or worse, a string of emojis. In the age of social media, language has reached new heights of ambiguity, with people using bite-sized bits of text and images to convey entire thoughts, arguments, and philosophies. And you thought interpreting Derrida was difficult.

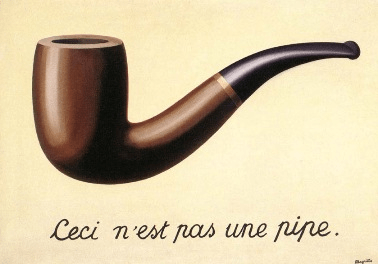

Social media has turned language into an evolving, shape-shifting entity. Words take on new meanings overnight, hashtags rise and fall, and memes become the shorthand for complex cultural commentary. In some ways, it’s brilliant—what better way to capture the madness of modern life than with an image of a confused cat or a poorly drawn cartoon character? But in other ways, it’s the final nail in the coffin for clear communication. We’ve gone from painstakingly crafted texts, like Luther’s 95 Theses, to memes that rely entirely on shared cultural context to make sense.

The irony is that we’ve managed to make language both more accessible and more incomprehensible at the same time. Sure, anyone can fire off a tweet or share a meme, but unless you’re plugged into the same cultural references, you’re probably going to miss half the meaning. It’s like Wittgenstein’s language games on steroids—everyone’s playing, but the rules change by the second, and good luck keeping up.

And then there’s the problem of tone. Remember those philosophical debates where words were slippery? Well, now we’re trying to have those debates in text messages and social media posts, where tone and nuance are often impossible to convey. Sarcasm? Forget about it. Context? Maybe in a follow-up tweet, if you’re lucky. We’re using the most limited forms of communication to talk about the most complex ideas, so it’s no surprise that misunderstandings are everywhere.

And yet, here we are, in the midst of the digital age, still using the same broken tool—language—to try and make sense of the world. We’ve come a long way from “flamey thing hot,” but the basic problem remains: words are slippery, meanings shift, and no matter how advanced our technology gets, we’re still stuck in the same old game of trying to get our point across without being completely misunderstood.

Conclusion: Language – Beautiful, Broken, and All We’ve Got

And here’s where the irony kicks in. We’ve spent this entire time critiquing language—pointing out its flaws, its limitations, its inability to truly capture abstract ideas. And how have we done that? By using language. It’s like complaining about how unreliable your GPS is while using it to get to your destination. Sure, it’s broken—but it’s still the only tool we have.

In the end, language is both our greatest achievement and our biggest limitation. It’s allowed us to build civilisations, create art, write manifestos, and start revolutions. But it’s also the source of endless miscommunication, philosophical debates that never get resolved, and social media wars over what a simple tweet really meant.

So yes, language is flawed. It’s messy, it’s subjective, and it often fails us just when we need it most. But without it? We’d still be sitting around the fire, grunting at each other about the ‘toothey thing’ lurking in the shadows. For better or worse, language is the best tool we’ve got for making sense of the world. It’s beautifully broken, but we wouldn’t have it any other way.

And with that, we’ve used the very thing we’ve critiqued to make our point. The circle of irony is complete.
