Perhaps not 100% because I’ve just spent hours chatting with several LLMs, complaining about the spate of purported AI detectors that tell me ‘this content shows a high similarity to AI-generated content’ or some such.

If I weren’t already familiar with the AI tells, I am now, as Claude reluctantly shared this:

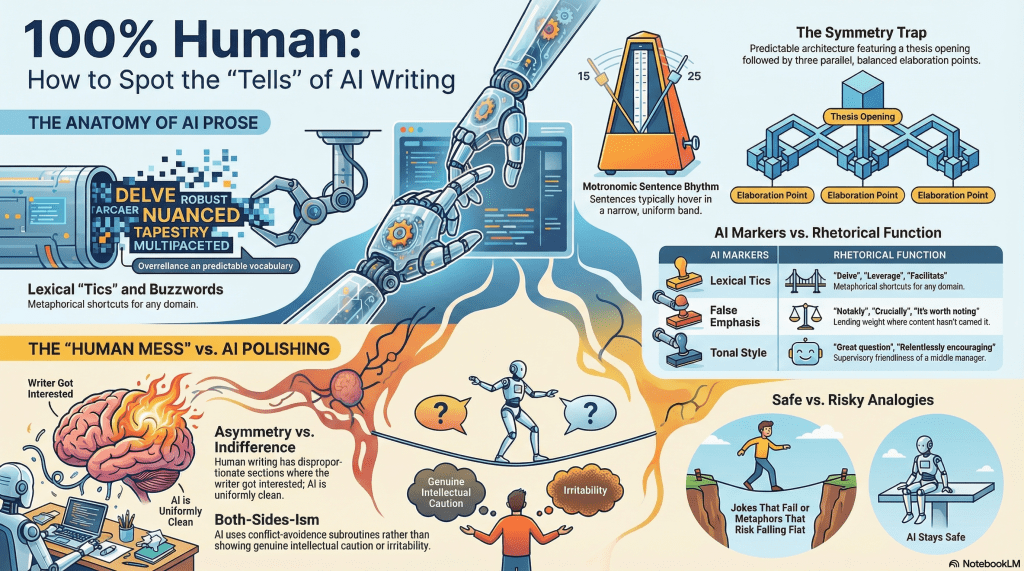

Lexical tics. The ones you’ve already flagged are real: “delve,” “robust,” “nuanced,” “multifaceted,” “landscape” (used metaphorically for any domain whatsoever), “tapestry,” “leverage” (as verb), “facilitate,” “underscores.” There’s also a tier of slightly subtler ones — “notably,” “crucially,” “it’s worth noting that,” “this is particularly important because” — which function as false-emphasis markers, lending rhetorical weight where the content doesn’t actually earn it.

Structural patterns. This is where it gets more diagnostic than any single word choice. LLM prose tends toward a very particular architecture: a thesis-restating opening sentence, three to five parallel elaboration points (often with suspiciously balanced treatment), and a synthesising conclusion that circles back to the opening. The ratio of setup-to-substance is high. Everything gets contextualised before it gets said. There’s a compulsive both-sides-ism, a hedging reflex — “while X is certainly true, it’s also important to consider Y” — that reads less like genuine intellectual caution and more like a conflict-avoidance subroutine.

Tonal tells. LLM text tends to be relentlessly encouraging. There’s an affective flatness masquerading as warmth — everything is “great question,” everything “raises important considerations.” The prose never gets genuinely irritable, confused, bored, or reckless. It maintains a kind of supervisory friendliness, the register of a middle manager who’s been on a coaching course. Relatedly, there’s almost never a genuinely surprising analogy or a metaphor that risks falling flat — the figurative language is always safe, always legible, always serviceable.

Punctuation and rhythm. The em-dash point is real but often overstated. More telling is sentence-length uniformity — LLM prose tends to hover in a narrow band of 15–25 words per sentence, rarely producing either the genuinely terse or the genuinely complex-subordinated. Paragraph length is also strikingly regular. The prose breathes at metronomic intervals.

The absence of mess. Perhaps the deepest tell. Human writing — even polished human writing — contains asymmetries: a paragraph that runs long because the writer got interested, a point that gets dropped without resolution, a transition that’s slightly rough, a joke that doesn’t quite land but stays in anyway. LLM prose is clean in a way that signals not craft but indifference — nothing is there because the writer cared about it, so nothing is disproportionate.
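
For the curious, the two measurable tells above (the lexical tics and the 15–25-word sentence band) are easy enough to count. What follows is only a rough sketch of my own, not how any actual detector works: the function names `sentence_lengths` and `tell_report` are made up for illustration, the word list is simply copied from the quote, and the sentence splitter is deliberately naive.

```python
import re
import statistics

# Assumption: this list is just the lexical tics quoted above, nothing official.
TIC_WORDS = {"delve", "robust", "nuanced", "multifaceted", "landscape",
             "tapestry", "leverage", "facilitate", "underscores"}


def sentence_lengths(text):
    """Naively split after ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def tell_report(text, low=15, high=25):
    """Summarise sentence-length regularity and count the listed tic words."""
    lengths = sentence_lengths(text)
    words = re.findall(r"[a-z']+", text.lower())
    in_band = sum(low <= n <= high for n in lengths)
    return {
        "sentences": len(lengths),
        "mean_length": statistics.mean(lengths) if lengths else 0.0,
        # A small spread means the prose "breathes at metronomic intervals".
        "length_spread": statistics.pstdev(lengths) if lengths else 0.0,
        "share_in_band": in_band / len(lengths) if lengths else 0.0,
        "tic_count": sum(w in TIC_WORDS for w in words),
    }
```

Calling `tell_report(draft)` on a draft returns a small dictionary of counts. A high `share_in_band` with a low `length_spread` would be the metronome described above, though a number like this proves nothing about who wrote the thing.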

Add to this the grammar checkers attempting to drive writing back to middle-school formats – not to mention competing grammar checkers hypercorrecting each other. The struggle is real.

Of course, a solution might be just to review the content and undo these inclusions. Almost interestingly, I use Autocrit (though not often, because it is too orthodox), and it does serve somewhat as an anti-AI agent, assessing rhythm and pace, plus the usual copyediting functions.

I prefer to draft in a stream-of-consciousness style. The AI organises my messes, so if I asked it to, it would remove or relocate my functional parenthetical about Autocrit. But I’m leaving it just to prove I’m human. Or did I add it to an AI-scripted piece? 🧐

Whilst I considered whether to overdo AI or join the 54 per cent of American adults who read below a sixth-grade level, Grok suggested something even more sinister – Friggin Musk. It suggested that I double down on the AI likeness and turn my content into an AI parody factory – overpopulate it with em-dashes, delving, and tapestry. Evidently, Carole King was AI before Suno.

In any case – and AI might suggest moving this to the top – the problem is that I now have an additional layer that interrupts my flow and process. It’s disconcerting, and I resent it. My psyche is disturbed to appease witch-hunters. And it’s bollox.

The question is whether to succumb to the moral suasion or ignore the moral posturing.

This post contains no sugar, salt, fat, carbohydrates, protein, or fibre. No animals were harmed in the production of this blog. All proceeds will be donated to the Unicorn Recovery Foundation.