How Modern Thought Mistakes Its Own Grid for Reality

Modern thought has a peculiar habit.

It builds a measuring device, forces the world through it, and then congratulates itself for discovering what the world is really like.

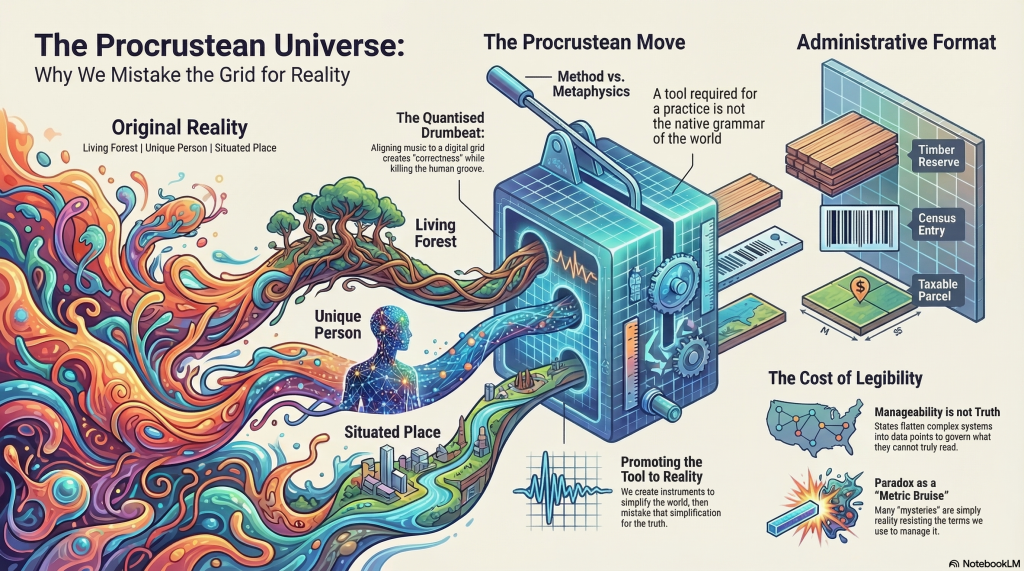

This is not always called scientism. Sometimes it is called rigour, precision, formalism, standardisation, operationalisation, modelling, or progress. The names vary. The structure does not. First comes the instrument. Then comes the simplification. Then comes the quiet metaphysical sleight of hand by which the simplification is promoted into reality itself.

Consider music.

A drummer lays down a part with slight drag, push, looseness, tension. It breathes. It leans. It resists the metronome just enough to sound alive. Then someone opens Pro Tools and quantises it. The notes snap to grid. The beat is now “correct”. It is also, very often, dead.

This is usually treated as an aesthetic dispute between old romantics and modern technicians. It is more than that. It is a parable.

Quantisation is not evil because it imposes structure. Every recording process imposes structure. The problem is what happens next. Once the grid has done its work, people begin to hear the grid not as a tool, but as truth. Timing that exceeds it is heard as error. The metric scaffold becomes the criterion of reality.

A civilisation can live like this.

It can begin with a convenience and end with an ontology.

Carlo Rovelli’s The Order of Time is useful here precisely because it unsettles the fantasy that time is a single smooth substance flowing uniformly everywhere like some celestial click-track. It is not. Time frays. It dilates. It varies by frame, relation, and condition. Space, too, loses its old role as passive container. The world begins to look less like a neat box of coordinates and more like an unruly field of relations that only reluctantly tolerates our diagrams.

This ought to induce some modesty. Instead, modern disciplines often respond by doubling down on the diagram.

That is where James C. Scott arrives, carrying the whole argument in a wheelbarrow. Seeing Like a State is not merely about states. It is about the administrative desire to make the world legible by reducing it to formats that can be counted, organised, compared, and controlled. Forests become timber reserves. People become census entries. Places become parcels. Lives become cases. The simplification is not wholly false. It is simply tailored to the needs of governance rather than to the fullness of what is governed.

That’s the key.

The state does not need the world in its density. It needs the world in a format it can read.

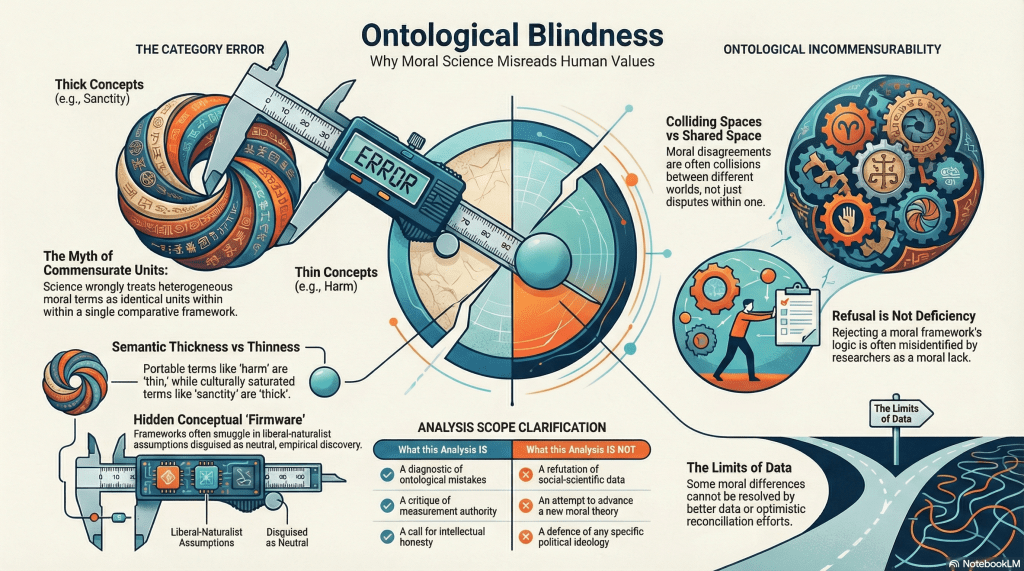

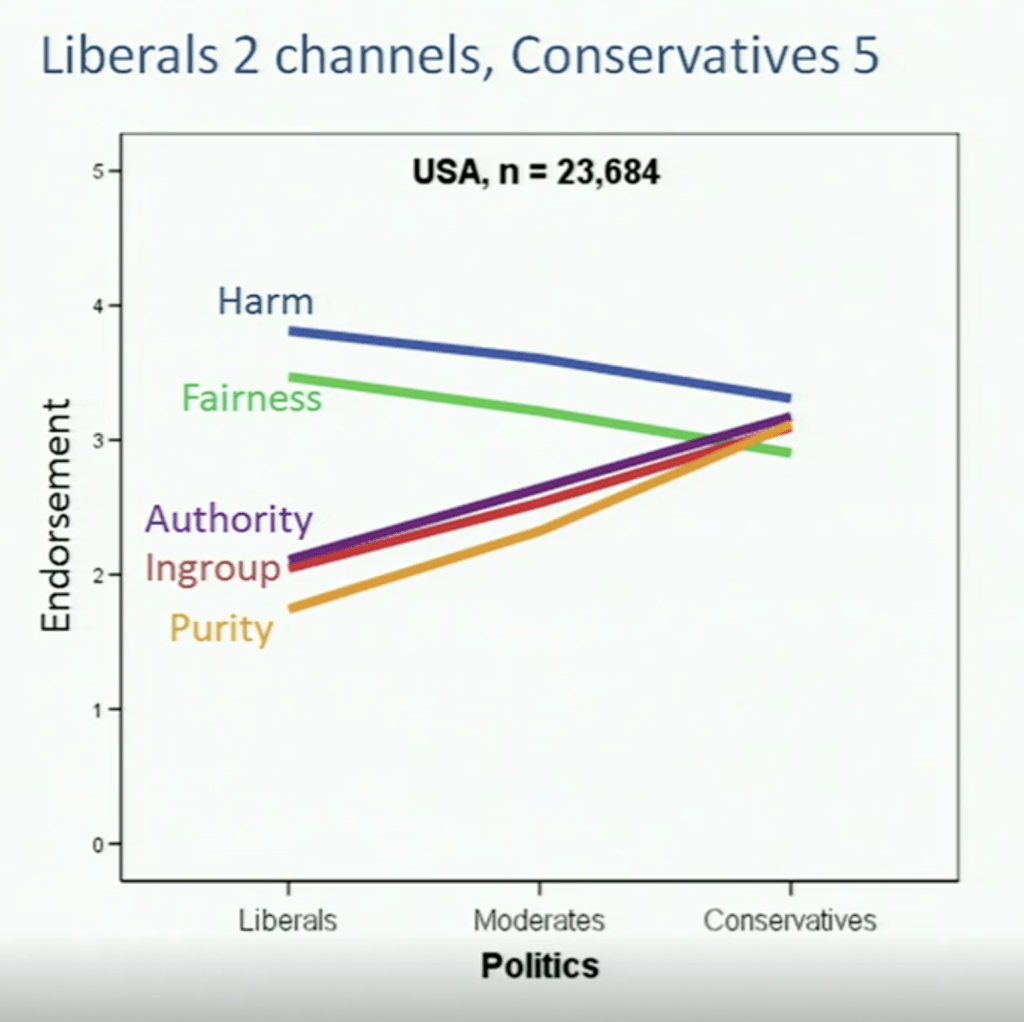

And modern disciplines are often no different. They require stable units, repeatable measures, abstract comparability, portable standards. Fair enough. No one is conducting physics with incense and pastoral reverie. But then comes the familiar conceit: what was required for the practice quietly becomes what reality is said to be. The discipline first builds the bed for its own survival, then condemns the world for failing to lie down properly.

This is the Procrustean move.

Cut off what exceeds the frame. Stretch what falls short. Call the result necessity.

Many supposed paradoxes begin here. Not in reality itself, but in the overreach of a measuring grammar.

I use a ruler to measure temperature, and I am surprised when the temperature does not cooperate.

The example is absurd, which is why it is helpful. The absurdity is not in the temperature. It’s in the category mistake. Yet much of modern thought survives by committing more sophisticated versions of precisely this error. We use tools built for extension to interpret process. We use spatial metaphors to capture time. We use statistical flattening to speak of persons. We use administrative categories to speak of communities. We use computational tractability to speak of mind. Then the thing resists, and we call the resistance mysterious.

Sometimes it is not mysterious at all. Sometimes it is merely refusal.

The world declines to be exhausted by the terms under which we can most easily manage it.

That refusal then returns to us under grander names: paradox, irrationality, inconsistency, noise, anomaly. But what if the anomaly is only the residue of what our instruments were built to exclude? What if paradox is often the bruise left by an ill-fitted measure?

This is where realism, at least in its chest-thumping modern form, begins to look suspicious. Not because there is no world. There is clearly something that resists us, constrains us, embarrasses us, punishes bad maps, and ruins bad theories. The issue is not whether there is a real. The issue is whether what we call “the real” is too often just what our current apparatus can stabilise.

That is not realism.

That is successful compression mistaken for ontology.

Space and time, in this light, begin to look less like the universe’s native grammar and more like the interface through which a certain kind of finite creature renders the world tractable. Useful, yes. Necessary for us, perhaps. Final? Hardly.

The same applies everywhere. We do not merely measure the world. We reshape it, conceptually and institutionally, until it better fits our preferred methods of seeing. Then we forget we did this.

Scott’s lesson is that states fail when they confuse legibility with understanding. Our broader civilisational lesson may be that disciplines fail in much the same way. They flatten in order to know, and then mistake the flattening for disclosure. What exceeds the frame is dismissed until it returns as contradiction.

None of this requires anti-scientific melodrama. Science is powerful. Measurement is indispensable. Standardisation is often the price of cumulative knowledge. The problem is not the existence of the grid. The problem is the promotion of the grid into metaphysics. A tool required for a practice is not therefore the native structure of the world. That should be obvious. It rarely is.

Scientism, in its most irritating form, begins precisely where this obviousness ends. It is not disciplined inquiry but disciplinary inflation: the belief that whatever can be rendered formally legible is most real, and whatever resists is merely awaiting capture by better instruments, finer models, sharper equations, more obedient categories. It is the provincial fantasy that the universe must ultimately speak in the accent of our methods.

Perhaps it doesn’t.

Perhaps our great achievement is not that we have discovered reality’s final language, but that we have become unusually good at mistaking our translations for the original.

Imagine that.