I like this bloke. Here, he clarifies Rorty’s perspective on Truth. I am quite in sync with Rorty’s position, perhaps 90-odd per cent.

Allow me to explain.

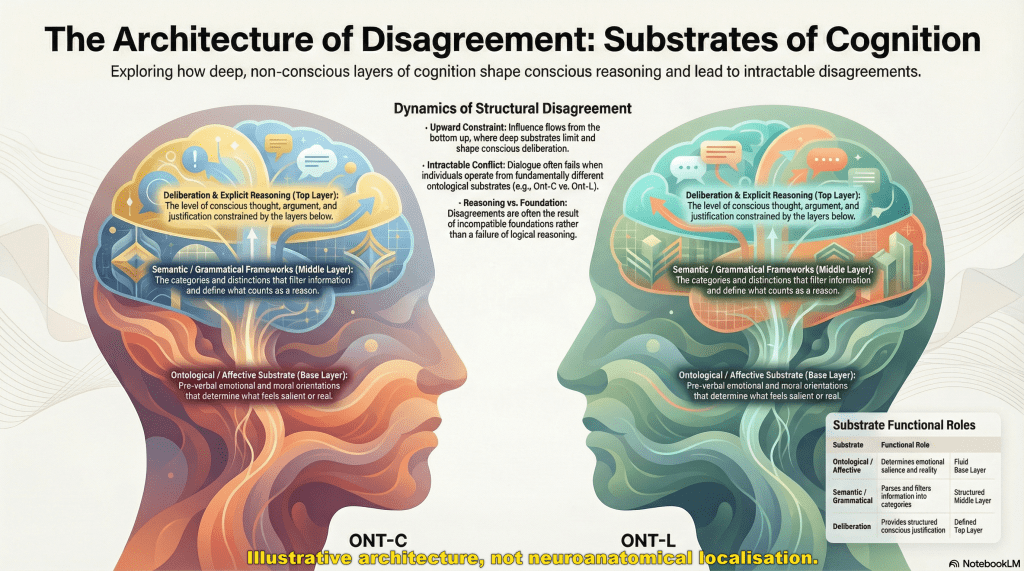

I have written about truth several times over the years (1, 2, 3, and more). In earlier posts, I put the point rather bluntly: truth is largely rhetorical. I still think that captured something important, but it now feels incomplete. With the development of my Mediated Encounter Ontology of the World (MEOW) and the Language Insufficiency Hypothesis (LIH), the picture needs tightening.

The first step is to stop pretending that ‘truth’ names a single thing.

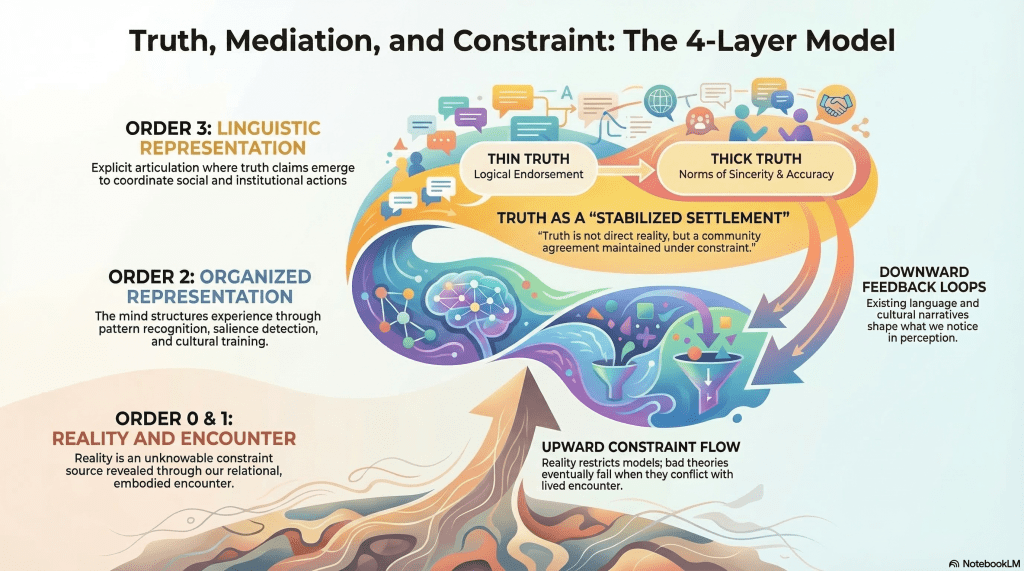

Philosopher Bernard Williams helpfully distinguished between thin and thick senses of truth in *Truth and Truthfulness*. The distinction is simple but instructive.

In its thin sense, truth is almost trivial. Saying ‘it is true that p’ typically adds nothing beyond asserting p. The word ‘true’ functions as a logical convenience: it allows endorsement, disquotation, and generalisation. Philosophically speaking, this version of truth carries very little metaphysical weight. Most arguments about truth, however, are not about this thin sense.

In practice, truth usually appears in a thicker social sense. Here, truth is embedded in practices of inquiry and communication. Communities develop norms around sincerity, accuracy, testimony, and credibility. These norms help stabilise claims so that people can coordinate action and share information.

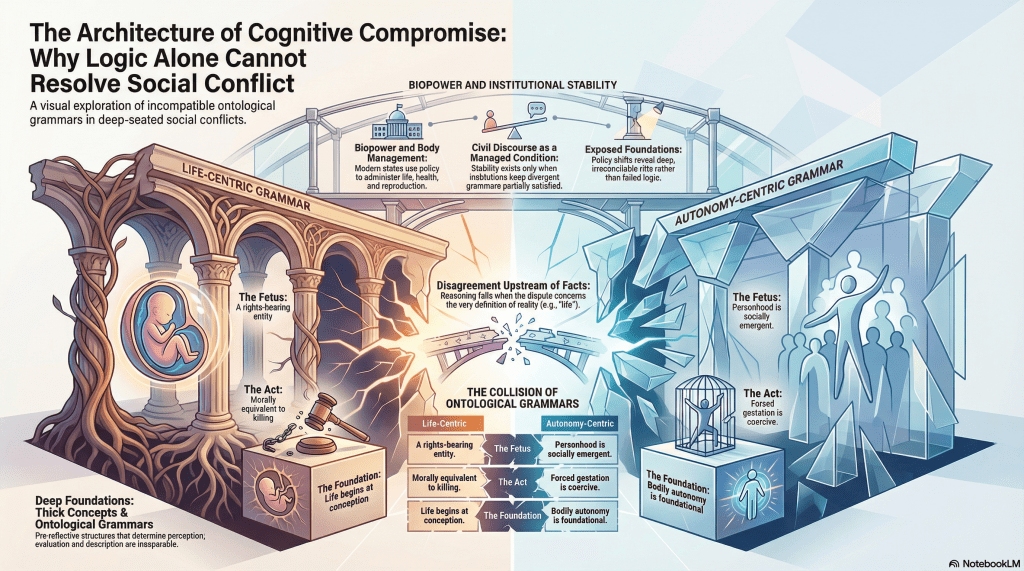

At this level, truth becomes something like a social achievement. A statement counts as ‘true’ when it can be defended, circulated, reinforced, and relied upon within a shared framework of interpretation. Evidence matters, but so do rhetoric, persuasion, institutional authority, and the distribution of power. This is the sense in which truth is rhetorical. But rhetoric is not sovereign.

Human beings can imagine almost anything about the world, yet the world has a stubborn habit of refusing certain descriptions. Gravity does not yield to persuasion. A bridge designed according to fashionable rhetoric rather than sound engineering will collapse regardless of how compelling its advocates may have been.

This constraint does not disappear in socially constructed domains. Institutions, identities, norms, and laws are historically contingent and rhetorically stabilised, but they remain embedded within material, biological, and ecological conditions. A social fiction can persist for decades or centuries, but eventually it encounters pressures that force revision.

Subjectivity, therefore, doesn’t imply that ‘anything goes’. It simply means that all human knowledge is mediated.

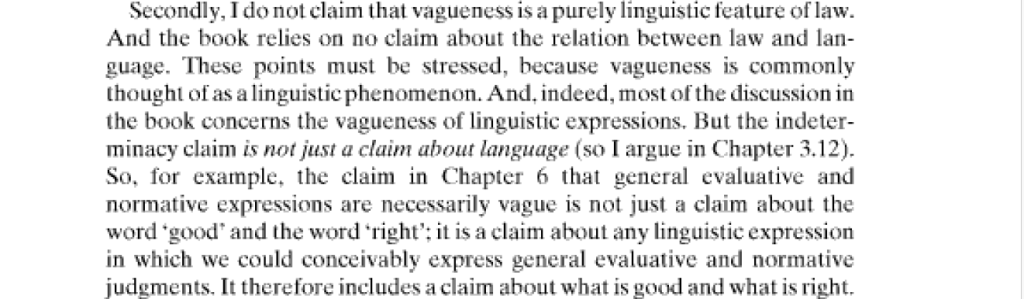

We encounter the world through perception, language, culture, and conceptual frameworks. Every description is produced from a particular standpoint, using particular tools, within particular historical circumstances. Language compresses experience and inevitably loses information along the way. No statement captures reality without distortion. This is the basic insight behind the Language Insufficiency Hypothesis.

At the same time, our descriptions remain answerable to the constraints of the world we inhabit. Some descriptions survive repeated encounters better than others.

In domains where empirical constraint is strong – engineering, physics, medicine – bad descriptions fail quickly. In domains where constraint is indirect – ethics, politics, identity, aesthetics – multiple interpretations may remain viable for long periods. In such cases, rhetoric, institutional authority, and power often function as tie-breakers, stabilising one interpretation over others so that societies can coordinate their activities. These settlements are rarely permanent.

What appears to be truth in one era may dissolve in another. Concepts drift. Institutions evolve. Technologies reshape the landscape of possibility. Claims that once seemed self-evident may later appear parochial or incoherent.

In this sense, many truths in human affairs are best understood as temporally successful settlements under constraint.

Even the most stable arrangements remain vulnerable to change because the conditions that sustain them are constantly shifting. Agents change. Environments change. Expectations change. The very success of a social order often generates the tensions that undermine it. Change, in other words, is the only persistence.

The mistake of traditional realism is to imagine truth as a mirror of reality – an unmediated correspondence between statement and world. The mistake of crude relativism is to imagine that language and power can shape reality without limit. Both positions misunderstand the situation.

We do not possess a final language that captures reality exactly as it is. But neither are we free to describe the world however we please. Truth is not revelation, and it is not mere invention.

It is the provisional stabilisation of claims within mediated encounter, negotiated through language, rhetoric, and institutions, and continually tested against a world that never fully yields to our descriptions. We don’t discover Truth with a capital T. We negotiate survivable descriptions under pressure.