Preamble

All too often, I’ll read or listen to a book and place bookmarks with the best of intentions to revisit and comment, yet either I never return, or I return unable to recall the context and unwilling to reread to regain it. I am going to attempt to document my reaction to Jonathan Haidt’s book, The Righteous Mind: Why Good People Are Divided by Politics and Religion. If you’ve read some posts here, you’ll understand that I am not a moralist, so I don’t expect to like the book or agree with it. I’ve already read the front matter, so I’ll return to comment on that before I get too far ahead. I have done this before at university, and it is decidedly slow progress and can send one down rabbit holes (this one, anyway).

I have a habit of abandoning books in favour of others, including dropping them outright. This is one of 16 I have in progress at the moment, some commenced as many as 5 years ago. To be fair to myself, many of those books are substantially completed. I feel I got the intended message, or at least got what I wanted out of them, and I just haven’t read the final few chapters. In some cases, the book is an anthology, and I have been slogging my way through it. A few books I’ve read before and am reabsorbing the material, so I may decide not to re-read cover to cover. I just pulled a second-reading book off the list to get to 16 from 17.

I have striven not to laugh at human actions, not to weep at them, not to hate them, but to understand them.

— Baruch Spinoza, Tractatus Politicus, 1676

Introduction

“Can we all get along?” — Rodney King

“Please, we can get along here. We all can get along. I mean, we’re all stuck here for a while. Let’s try to work it out.”

Born to be Righteous

I could have titled this book The Moral Mind to convey the sense that the human mind is designed to “do” morality, just as it’s designed to do language, sexuality, music, and many other things described in popular books reporting the latest scientific findings.

Emphasis mine

Straight away, I have a point of contention. The human mind is not designed to do anything. It has evolved and performs functions. Perhaps this is just a matter of semantics, but it puts me on guard. Moreover, that the mind does morality says nothing about the relative benefit of doing so or whether it should be done at all. Without going down the aforementioned rabbit hole, language is a perfect example. We use language to communicate, but language as a social mechanism may be a secondary or tertiary function. As I’ve argued, even quite recently, this is one reason I feel that language is insufficient for conveying abstract concepts such as morals and morality.

But I chose the title The Righteous Mind to convey the sense that human nature is not just intrinsically moral, it’s also intrinsically moralistic, critical, and judgmental.

A primary function of the brain is as a difference engine. This is what allows us to discern friend from foe, edible from poisonous, and so on. Reflecting on Kahneman and Tversky, most (if not ostensibly all) of this is a heuristic System 1 process, which is good enough but only at a distance. Morals allow us to create in-group and out-group distinctions.

I want to show you that an obsession with righteousness (leading inevitably to self-righteousness) is the normal human condition. It is a feature of our evolutionary design, not a bug or error that crept into minds that would otherwise be objective and rational.

To my first point: not only does he insist on a design metaphor, he doubles down by declaring it not a bug or an error. This is disconcerting. And it may be a normal human condition, but so is cancer. The appeal to nature isn’t winning me over.

Our righteous minds made it possible for human beings—but no other animals—to produce large cooperative groups, tribes, and nations without the glue of kinship.

Agreed.

What Lies Ahead

Part I is about the first principle: Intuitions come first, strategic reasoning second.

If you think that moral reasoning is something we do to figure out the truth, you’ll be constantly frustrated by how foolish, biased, and illogical people become when they disagree with you. But if you think about moral reasoning as a skill we humans evolved to further our social agendas—to justify our own actions and to defend the teams we belong to—then things will make a lot more sense.

Haidt and I are closely aligned on these points.

Keep your eye on the intuitions, and don’t take people’s moral arguments at face value. They’re mostly post hoc constructions made up on the fly, crafted to advance one or more strategic objectives.

Not buying the ‘go with your intuitions’ advice. Moving on.

…the mind is divided, like a rider on an elephant, and the rider’s job is to serve the elephant … I developed this metaphor in my last book, The Happiness Hypothesis.

I’m not sure I am going to like this dualism, and I haven’t read The Happiness Hypothesis, so I’ll just have to see where he takes it. It seems like Haidt is a hardcore Traditionalist.

Part II is about the second principle of moral psychology, which is that there’s more to morality than harm and fairness.

This feels about right.

The central metaphor of these four chapters is that the righteous mind is like a tongue with six taste receptors.

OK. Let’s see where this goes.

Part III is about the third principle: Morality binds and blinds.

I like this pair.

…human beings are 90 percent chimp and 10 percent bee.

Did he say bee? I agree with the chimp reference. Maybe this won’t be as bad as I thought.

A note on terminology: In the United States, the word liberal refers to progressive or left-wing politics, and I will use the word in this sense. But in Europe and elsewhere, the word liberal is truer to its original meaning—valuing liberty above all else, including in economic activities. When Europeans use the word liberal, they often mean something more like the American term libertarian, which cannot be placed easily on the left-right spectrum. Readers from outside the United States may want to swap in the words progressive or left-wing whenever I say liberal.

Decent advice.

Why do you see the speck in your neighbor’s eye, but do not notice the log in your own eye? … You hypocrite, first take the log out of your own eye, and then you will see clearly to take the speck out of your neighbor’s eye.

— MATTHEW 7:3–5

I do find myself, probably too often, parroting this passage.

PART I

Intuitions Come First, Strategic Reasoning Second

Central Metaphor: The mind is divided, like a rider on an elephant, and the rider’s job is to serve the elephant.

Where Does Morality Come From?

A family’s dog was killed by a car in front of their house. They had heard that dog meat was delicious, so they cut up the dog’s body and cooked it and ate it for dinner. Nobody saw them do this.

A man goes to the supermarket once a week and buys a chicken. But before cooking the chicken, he has sexual intercourse with it. Then he cooks it and eats it.

TBD

The Origin of Morality

Quick reaction for now. Details to follow…

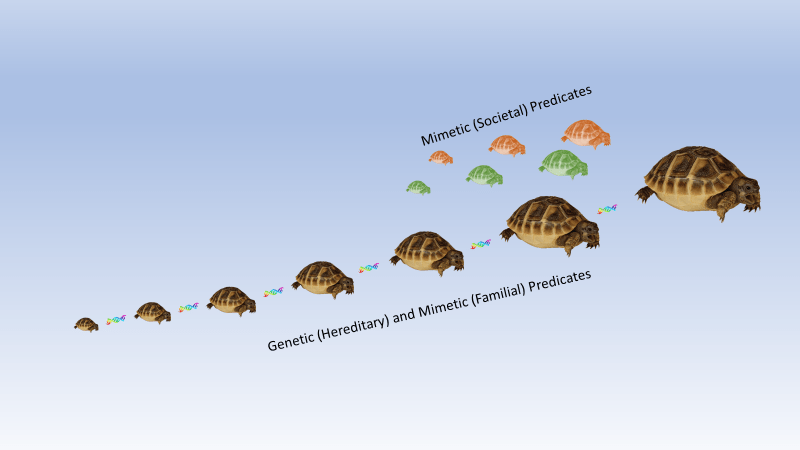

I’m not quite buying into Haidt’s attempt to parse the nature versus nurture argument into three segments, nativism and empiricism plus rationalism, insomuch as rationalism is seen by many as ambiguous and not a mutually exclusive option. It feels as though he’s throwing up a rationalist strawman to take down. We’ll see where it leads.

Nativism

the theory that concepts, mental capacities, and mental structures are innate rather than acquired by learning.

Empiricism

the theory that all knowledge is derived from sense-experience.

Rationalism

the theory that reason rather than experience is the foundation of certainty in knowledge.

Let’s pick up on this later. I knew this would take a lot longer.