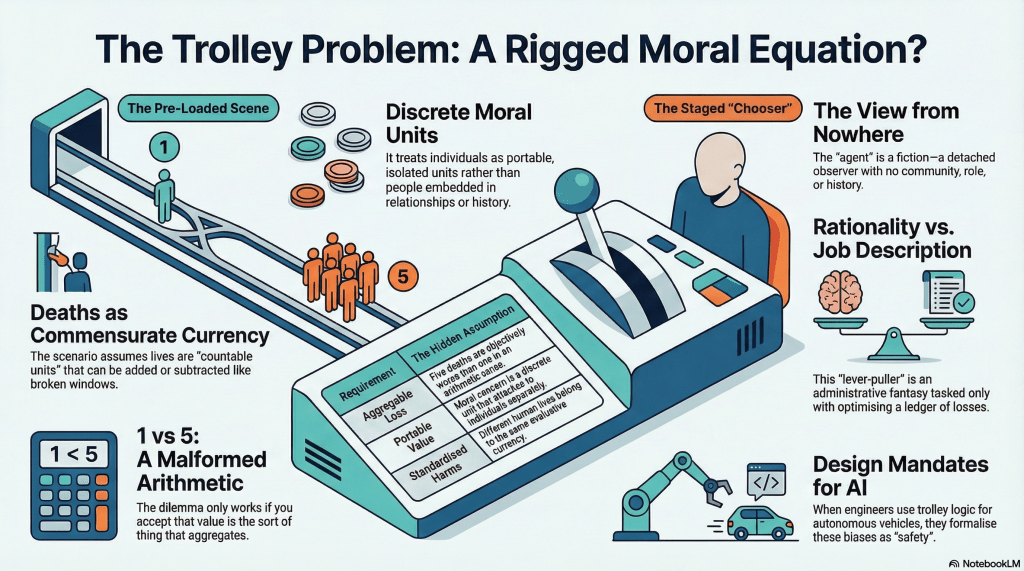

The trolley problem is not a neutral test of moral judgment. It’s a borrowed ontology, transmogrified into a moral test. Before anyone reasons about anything, the scene has already decided what sort of things there are to reason about: discrete persons, countable lives, comparable harms, and a chooser licensed to survey them from nowhere in particular.

What follows from it isn’t a clarification of moral principle but a rehearsal within terms already set.

The Scene Is Already Loaded

The standard trolley case presents itself as raw moral data – a clean dilemma, stripped of the mess of the real world, offered up for principled adjudication. It is nothing of the sort.

Before you are invited to reason, the scenario has already done substantial philosophical work on your behalf. It has individuated persons into discrete units. It has rendered their lives countable. It has made their deaths commensurable – one loss weighed against five, as though the comparison were as natural as subtraction. And it has structured the whole affair as a problem of adjudication: here are the facts, now judge.

None of this is neutral. Every one of those moves is a substantive ontological commitment dressed up as stage direction.

Take commensurability alone. The question ‘should you divert the trolley to kill one instead of five?’ only functions as a dilemma if those deaths belong to the same evaluative currency. If they don’t – if, say, the value of a life isn’t the sort of thing that submits to arithmetic – then the problem is not difficult. It is malformed. The anguish it is supposed to provoke is an artefact of its own framing, not a discovery about ethics.

The maths is real enough. What’s dubious is the ontology that made the arithmetic possible.

The Chooser Is a Staged Fiction

The scene isn’t the only thing preformatted. What about the agent?

The trolley chooser stands outside the situation, surveys the options, and selects. They are not embedded in a community, encumbered by role, constrained by relationship, or shaped by history. They’re a pure point of detached rational adjudication – the moral equivalent of a view from nowhere.

The point isn’t that no one ever chooses under pressure. Of course they do. The point is that the trolley problem presents detached adjudication as though it were the natural form of moral intelligence. As though stripping away context, relationship, role, and history were a way of clarifying moral reasoning rather than of impoverishing it beyond recognition.

The solitary lever-puller, surveying outcomes from above, isn’t morality stripped to its essentials. It’s a modern administrative fantasy.

They’re the civil servant of ethical theory: contextless, disembodied, tasked only with optimising a ledger they didn’t write and can’t question. The scenario doesn’t merely place them in a difficult position. It constructs them as the kind of agent for whom moral life consists of exactly this: tallying comparable losses under time pressure and choosing the smaller number.

That isn’t the human condition. It’s a job description.

The Grammar Is Borrowed

It gets worse.

It’s one thing to say that trolley problems are structured rather than neutral. Most thought experiments are structured. Simplification is the point. The real indictment isn’t that the trolley case has assumptions, but that it has these assumptions – and that they are not universal features of moral reasoning but the inherited furniture of a very particular intellectual tradition.

Consider what the scenario requires you to accept before you even begin deliberating:

- That persons are discrete, portable units of moral concern. That value is the sort of thing that attaches to them individually and can be summed across them.

- That losses are aggregable and commensurable – five deaths are worse than one in the same way that five broken windows are worse than one.

- That ethical judgement, at its most serious, takes the form of an isolated decision-maker surveying comparable outcomes and selecting among them.

This is not the skeleton of rationality itself. It is a picture – modern, liberal, administrative – of what rationality looks like when it has been formatted for a particular kind of governance. The trolley problem does not merely presuppose an ontology. It presupposes this one.

And the trick – the real laundering – is that it presupposes it so thoroughly that the presupposition becomes invisible. Respondents argue furiously about whether to pull the lever, push the fat man, or stand paralysed by principle, without ever noticing that the terms of the argument were installed before they arrived. The metaphysics entered the room disguised as a trolley schedule.

What Trolley Problems Actually Reveal

If all of this is right, then the usual interpretation of trolley responses has the direction of explanation backwards. The standard reading goes something like: present a moral dilemma, observe the response, infer a moral principle. Consequentialists pull the lever. Virtue ethicists pose. Stoics watch. Deontologists refuse to pull the lever, on principle alone. The disagreement reveals something about the structure of moral thought.

But if the scene is already ontologically loaded, and the chooser already formatted for a particular style of deliberation, then what the response reveals isn’t an independently accessed moral truth. It’s the respondent’s prior comfort with the ontological grammar that the case has already installed. Those who pull the lever are not discovering that consequences matter. They are confirming that the grammar of aggregable, commensurable lives is one they already inhabit. Those who refuse aren’t discovering that persons are inviolable. They are resisting, perhaps inarticulately, a grammar that does not match the one they brought into the room.

The disagreement is real. But it’s not a disagreement about what’s right. It is a disagreement about what there is – about what a person is, what a life is, whether value aggregates, whether agency is the sort of thing that can be exercised from nowhere. It’s an ontological dispute conducting itself in moral attire.

Trolley problems don’t tell us what’s right. They tell us what we already think there is to count.

This matters beyond moral philosophy. The moment trolley logic is recruited for autonomous vehicles, military robotics, or triage systems, its hidden ontology ceases to be a parlour-game inconvenience and becomes a design mandate. Engineers do not escape the metaphysics of the scene. They inherit it, formalise it, and call the result safety. That may be the more urgent article.

The next question is not whether a self-driving car should kill one pedestrian rather than five. It is how such a machine came to inherit a world in which persons appear as countable units, harms as optimisable variables, and moral action as a problem of detached calculation in the first place.